How It Works & Consensus FAQs

Have a question about science, health, fitness, or diet? Get cited, evidence-based insights with Consensus.

What is Consensus?

Consensus is an academic search engine, powered by AI but grounded in scientific research. We use large language models (LLMs) and purpose-built vector search technology to surface the most relevant papers. We synthesize both topic-level and paper-level insights. Everything is connected to real research papers.

What does Consensus search over?

200M+ Research Papers

The current source material used in Consensus comes from the Semantic Scholar database, which includes over 200M papers across all domains of science. We continue to add more data over time and our dataset is updated monthly.

How should I format my search query?

Here are some examples. You can also check out our help article: How to Search

- Try single-word searches to start learning about a subject or topic: Avocados or Cancer.

- See how two concepts relate to each other: Magnesium & Sleep or Vitamin C & colds or Impact of climate change on GDP

- Ask ‘Yes’ or ‘No’ type questions: Does lack of sleep increase Alzheimer’s risk?

- Add an instruction for Copilot with your search: Does immigration improve local economies? Group together pro and con cases

How does a Consensus search work?

We run a custom fine-tuned language model over our entire corpus of research papers and extract the Key Takeaway from every paper. We then remove words like ‘what’, ‘is’, and ‘are’ from the query and run a combination of keyword search and vector search over the abstract and title of every paper. Together, these give us a relevance score for each research paper against your query.
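As a rough illustration of the query cleanup and the keyword + vector combination described above, here is a minimal Python sketch. It is not the Consensus implementation: the stopword list contains only the three words named above, the function names are hypothetical, the vectors are assumed to come from some embedding model that is not shown, and the equal `alpha` weighting is an assumption.

```python
import math

# Assumption: only 'what', 'is', and 'are' are named in the text above;
# a production stopword list is probably longer.
STOPWORDS = {"what", "is", "are"}


def strip_stopwords(text: str) -> list[str]:
    """Lowercase, split on whitespace, trim punctuation, drop stopwords."""
    tokens = (t.strip("?.,!:;").lower() for t in text.split())
    return [t for t in tokens if t and t not in STOPWORDS]


def keyword_score(query_tokens: list[str], doc_text: str) -> float:
    """Fraction of query tokens found in a paper's title + abstract text."""
    if not query_tokens:
        return 0.0
    doc_tokens = set(strip_stopwords(doc_text))
    return sum(t in doc_tokens for t in query_tokens) / len(query_tokens)


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def hybrid_relevance(keyword: float, vector: float, alpha: float = 0.5) -> float:
    """Hypothetical blend: alpha weights the keyword match, 1 - alpha the vector similarity."""
    return alpha * keyword + (1 - alpha) * vector
```

In this sketch, a paper that matches every query word but whose embedding is unrelated to the query would score 0.5 with the default weighting.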

How are results & their relevance determined?

The relevance score from the search step is then combined with many other pieces of metadata, including but not limited to citation count, citation velocity, study design, and publication date, to re-rank the results and select the top 20 to surface.
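One way to picture this re-ranking step is a weighted score over the relevance value and the metadata fields listed above. The sketch below is illustrative only: the `Paper` fields, the weights, the study design table, and the recency formula are all assumptions, not values published by Consensus.

```python
import math
from dataclasses import dataclass


@dataclass
class Paper:
    title: str
    relevance: float           # score from the search step, assumed to lie in [0, 1]
    citation_count: int
    citations_per_year: float  # stand-in for "velocity of citations"
    study_design: str
    year: int


# Hypothetical weights per study design; unknown designs fall back to 0.3.
DESIGN_WEIGHT = {"meta-analysis": 1.0, "rct": 0.8, "observational": 0.5}


def rerank_score(paper: Paper, current_year: int = 2024) -> float:
    """Blend relevance with metadata signals. All weights are assumptions."""
    citations = min(math.log1p(paper.citation_count) / 10, 1.0)
    velocity = min(math.log1p(paper.citations_per_year) / 10, 1.0)
    design = DESIGN_WEIGHT.get(paper.study_design, 0.3)
    recency = 1 / (1 + max(0, current_year - paper.year))
    return (0.6 * paper.relevance + 0.1 * citations + 0.1 * velocity
            + 0.1 * design + 0.1 * recency)


def top_results(papers: list[Paper], k: int = 20) -> list[Paper]:
    """Sort by the blended score, highest first, and keep the top k."""
    return sorted(papers, key=rerank_score, reverse=True)[:k]
```

The log scaling on citations is a design choice for the sketch: it keeps a handful of very highly cited papers from drowning out relevance.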

How can I use the Consensus Copilot?

Consensus Copilot brings ChatGPT-type functionality to Consensus. Along with your search, you can have Copilot answer questions, draft content, create lists, and more. Just tell Copilot what to do along with your query.

For example:

Unlike generative LLMs like ChatGPT, Copilot is connected to the science. Each number in Copilot’s answer refers to the research paper that the information is drawn from. Click on a number in Copilot and you’ll drop down to the research paper with the same number on your results page.

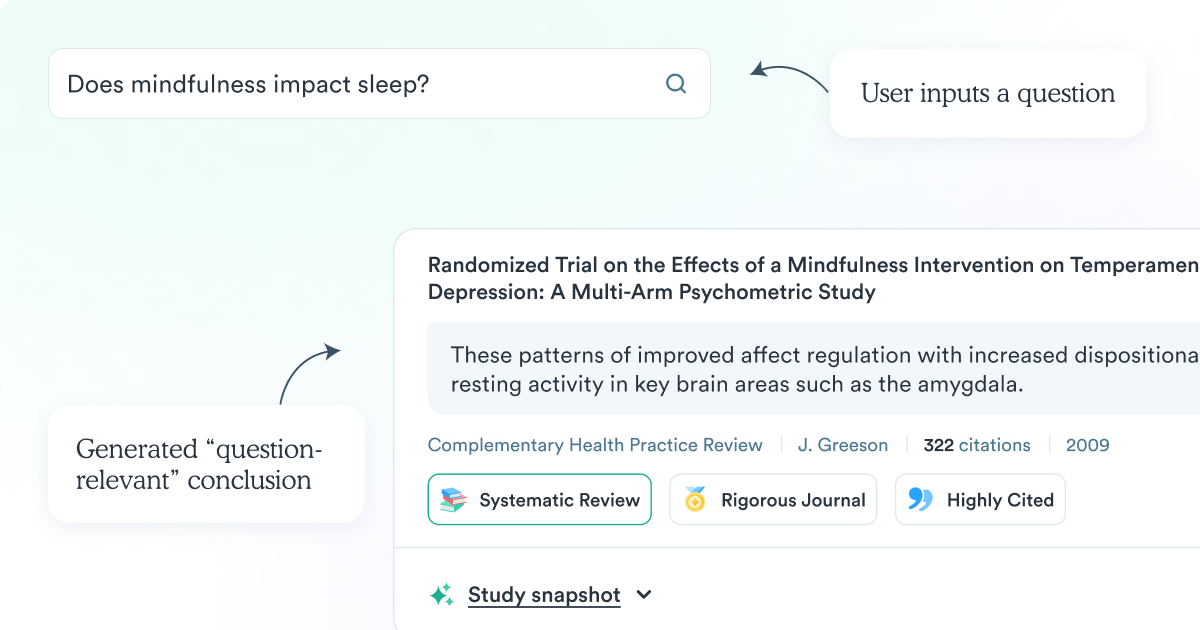

How are question-type search queries answered?

When you input a question or a phrase (e.g. the benefits of mindfulness), we run a custom fine-tuned language model that generates a question-relevant conclusion based on the user query and the abstract of the given paper. For every other type of search, we use the Key Takeaway that we have already extracted.

How is the search result ranking determined?

This is the final order in which the research paper results appear on the results page. With this new list of 20 results (each represented by either its generated conclusion or its Key Takeaway), we run a final custom fine-tuned language model built for question answering that ranks the results according to how well they address the user’s query.

How is the summary & Consensus Meter powered?

For a question or phrase search with enough relevant results, we run OpenAI’s GPT-4 large language model over the top 10 results to produce a simple one-sentence summary of what the top studies say about your question. This generated summary can be seen in the Summary box in the top left corner of the results page.

If you enter a yes/no question and there are enough relevant results, we run a custom fine-tuned LLM that classifies each result as suggesting “yes”, “no”, or “possibly” in answer to your question. The aggregated results of this model can be seen in the Consensus Meter in the top right corner of the results page.
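The aggregation behind the Consensus Meter can be pictured as a simple tally of the per-paper labels. The sketch below assumes the classifier's labels arrive as plain strings and that the meter shows percentages; both are assumptions for illustration, and `consensus_meter` is a hypothetical name.

```python
from collections import Counter

LABELS = ("yes", "no", "possibly")


def consensus_meter(labels: list[str]) -> dict[str, float]:
    """Return the percentage of results per label, ignoring unknown labels."""
    counts = Counter(label for label in labels if label in LABELS)
    total = sum(counts.values())
    if total == 0:
        # No classified results: report zero for every label.
        return {label: 0.0 for label in LABELS}
    return {label: round(100 * counts[label] / total, 1) for label in LABELS}
```

For example, four results labeled yes, yes, no, and possibly would give 50% yes, 25% no, and 25% possibly.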

What academic features are in Consensus?

🔍 Search Filters: Filter by sample size, study design, methodology, open access status, human or animal studies, and many more.

✨ Paper-level insights: We extract key insights and answers. Locate the most helpful papers and digest their insights faster.

🧑🏽‍🤝‍🧑🏽 Study Snapshot: Our Study Snapshot quickly shows key information like Population, Sample size, Methods, and more, all within the results page.

🥇 Quality indicators: Focus on the best papers – intuitive labels for citations, journal quality, and study type.

🎓 Auto-citations: Consensus auto-creates citations in multiple formats. We also integrate with Zotero and Paperpile, with EndNote coming soon!

💻 CSV Export: Export search results (and soon Lists) to CSV. Includes 11 paper-level detail columns & the paper’s link.

📋 Lists & Bookmarks: Stay organized by saving lists of papers or whole searches. Export search results (+paper-level details) to CSV.

What topic overview features are in Consensus?

✨ Consensus Meter: Quickly see the scientific consensus & gain topic context and direction.

✨ Consensus Copilot: Simply include an instruction in your search. Ask Copilot to adopt a style, draft content, format output, create lists, and more, then read a referenced topic synthesis.

Who made Consensus?

The Consensus team! Read more about who’s on the team here. Christian Salem and Eric Olson are the Co-founders of Consensus.

The Consensus mission is to make the world’s best information accessible to everyone.

Find the best science, faster, with Consensus.

—

This page was created based on feedback from Consensus users.

Is there an FAQ we missed? Email us with your feedback!
